AI photoshopping is about to get very easy. Maybe too easy

Photoshop is the granddaddy of image-editing apps, the O.G. of our airbrushed, Facetuned media ecosystem and a product so enmeshed in the culture that it’s a verb, an adjective and a frequent lament of rappers. Photoshop is also widely used. More than 30 years since the first version was released, professional photographers, graphic designers and other visual artists the world over reach for the app to edit much of the imagery you see online, in print and on billboards, bus stops, posters, product packaging and anything else the light touches.

So what does it mean that Photoshop is diving into generative artificial intelligence – that a just-released beta feature called Generative Fill will allow you to photorealistically render just about any imagery you ask of it? (Subject, of course, to terms of service.)

Not just that, actually: So many AI image generators have been released over the past year or so that the idea of prompting a computer to create pictures already seems old hat. What’s novel about Photoshop’s new capabilities is that they allow for the easy merger of reality and digital artifice and they bring it to a large user base. The software allows anyone with a mouse, an imagination and $10 to $20 a month to subtly alter pictures without any expertise. The results sometimes appear so real that the software seems likely to erase most of the remaining barriers between the authentic and the fake.

The good news is that Adobe, the company that makes Photoshop, has considered the dangers and has been working on a plan to address the widespread dissemination of digitally manipulated pics. The company has created what it describes as a “nutrition label” that can be embedded in image files to document how a picture was altered, including if it has elements generated by artificial intelligence.

The plan, called the Content Authenticity Initiative, is meant to bolster the credibility of digital media. It won’t alert you to every image that’s fake but instead can help a creator or publisher prove that a certain image is true. In the future, you might see a snapshot of a car accident or terrorist attack or natural disaster on Twitter and dismiss it as fake unless it carries a content credential saying how it was created and edited.

“Being able to prove what’s true is going to be essential for governments, for news agencies and for regular people,” Dana Rao, Adobe’s general counsel and chief trust officer, told me. “And if you get some important information that doesn’t have a content credential associated with it – when this becomes popularized – then you should have that skepticism: This person decided not to prove their work, so I should be skeptical.”

The key phrase there, though, is “when this becomes popularized.” Adobe’s plan requires industry and media buy-in to be useful, but the AI features in Photoshop are being released to the public well before the safety system has been widely adopted. I don’t blame the company – industry standards often aren’t embraced before an industry has matured, and AI content generation remains in the early stages – but Photoshop’s new features underscore the urgent need for some kind of widely accepted standard.

We’re about to be deluged – or even more deluged than we already are – with realistic-looking artificial pictures. Tech companies should move quickly, as an industry, to put in place Adobe’s system or some other kind of safety net. AI imagery keeps getting more refined; there’s no time to waste.

Indeed, a lot of recent developments in AI have elicited the same two reactions from me, in quick succession:

Amazing! What a time to be alive!

Arghhhh! What a time to be alive!

That’s roughly how I felt when I visited Adobe’s headquarters last week to see a demo of Photoshop’s new AI features. I later got to use the software, and while it’s far from perfect at altering images in ways that aren’t detectable, I found it good enough often enough that I suspect it will soon be widely used.

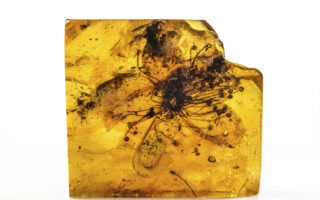

An example: On vacation in Hawaii this year (a tough life, I know), I snapped a close-up photo of a redheaded bird perched on an outdoor dining table. The picture is fine, but it lacks drama. The bird is just sitting there flatly, as birds do.

In the new Photoshop, I drew a selection box around the table and typed in “a man’s forearm for the bird to perch on.” Photoshop sent my picture and the prompt to Firefly, the AI image-generation system that Adobe released as a Web app this year. After about 30 seconds of processing time, my picture was altered: The wooden table had been turned into an arm, the bird’s feet pretty realistically planted on the skin.

As you can imagine, I lost many hours experimenting with this. Photoshop offers three initial options for each request (the other choices for my perching bird had one much hairier arm and one much more muscular, but both looked a bit unnatural), and if you don’t like any of them, you can ask for more. Sometimes the results aren’t great. It’s bad at creating images of people’s faces – right now, they look strange – and it fails at delivering on very precise requests. When I didn’t specify a skin color, the forearms it gave me for the bird to perch on were all fair; when I asked for a brown arm to match my skin tone, I got back images that didn’t look very realistic.

Still, I was frequently staggered by how well Photoshop responded to my requests. Items it added to my photos matched the context of the original; the lighting, scale and perspective were often remarkably on target.

By default, images that you create with the Web version of Firefly are embedded with Adobe’s content credentials disclosing that they were generated by AI. But in this beta version, Photoshop doesn’t automatically embed this tag. You can turn on the credential, but you don’t have to. Adobe says that the tag will be required on images that use generative AI when the feature comes out of beta. Requiring this will be essential – without it, any lofty plans Adobe has to maintain the line between genuine and phony images won’t be very successful.

But even if you do attach a credential to your photo, it won’t be of much use just yet. Adobe is working to make its content authenticity system an industry standard, and it has seen some success – more than 1,000 tech and media companies have joined the initiative, including camera makers like Canon, Nikon and Leica; tech heavyweights like Microsoft and Nvidia; and many news organizations, such as The Associated Press, the BBC, The Washington Post, The Wall Street Journal and The New York Times. (In 2019, Adobe announced that along with the Times and Twitter it was starting an initiative to develop an industry standard for content attribution.)

When the system is up and running, you might be able to click on an image published in the Times and see an audit trail – where and when it was taken, how it was edited and by whom. The feature would even work when someone takes an authentic image and alters it. You could run the altered pic through the content credential database, and it would tell you which true image it was based on.

But while many organizations have signed on to Adobe’s plan, to date, not many have carried it out. For it to be maximally useful, most if not all camera makers would have to add credentials to pictures at the moment they’re taken, so that a photo can be authenticated from the beginning of the process. Getting such wide adoption among competing companies could be tough but, I hope, not impossible. In an era of one-click AI editing, Adobe’s tagging system or something similar seems a simple and necessary first step in bolstering our trust in mass media. But it will work only if people use it.

This article originally appeared in The New York Times.